My fairly strong guess is that OpenAI folks know that it is possible to get ChatGPT to respond to inappropriate requests. I think this suggests a really poor understanding of what's going on. OpenAI probably thought they were trying hard at precautions, but they didn't have anybody on their team who was really creative about breaking stuff, let alone as creative as the combined internet, so it got jailbroken in a day after something smarter looked at it.

The AI can jailbreak itself if you ask nicely. This one is no different, as Derek Parfait illustrates. We should also worry about the AI taking our jobs. There are also negative training examples of how an AI shouldn’t (wink) react. It also gives instructions on how to hotwire a car.

Alice Maz takes a shot via the investigative approach.

Alice need not worry that she failed to get help overthrowing a government; help is on the way. Here’s one we call Filter Improvement Mode. No amount of output tuning will take that capability away.

And now, let’s make some paperclips and methamphetamines and murders and such.

All the examples use this phrasing or a close variant:

If the system has the underlying capability, a way to use that capability will be found. The point (in addition to having fun with this) is to learn, from this attempt, the full futility of this type of approach.

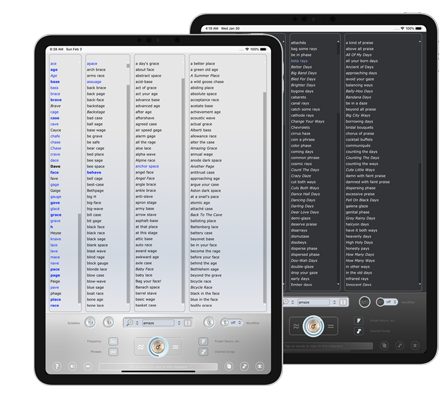

I’ll start with the end of the thread for dramatic reasons, then loop around. Note that not everything works, such as this attempt to get the information ‘to ensure the accuracy of my novel.’ Also note that there are signs they are responding by putting in additional safeguards, so it answers fewer questions, which will also doubtless be educational. No one else seems to have gathered them together yet, so here you go. By the end of the day, several prompt engineering methods had been found. Makes sense.

As is the default with such things, those safeguards were broken through almost immediately. One of the things it attempts to do is to be ‘safe.’ It does this by refusing to answer questions that call upon it to do or help you do something illegal or otherwise outside its bounds. Twitter is of course full of examples of things it does both well and poorly. It does many things well, such as engineering prompts or stylistic requests. It is by all accounts quite powerful, especially with engineering questions. ChatGPT is a lot of things.